Tech | WAVESHAPER, Axios, and the Supply-Chain Backdoor Problem

A security episode on supply-chain compromise, malicious package behavior, JavaScript ecosystem risk, and attribution caution.

Episode text

Overview

Supply-chain security, malicious packages, backdoors, JavaScript ecosystem risk, and attribution caution.

Transcript

Coda: Imagine a piece of critical infrastructure that is, well, trusted by 100 million people every single week.

Odyssey: Right. It’s a massive scale.

Coda: Yeah. And now imagine someone just silently replaced a single, totally invisible gear inside it. Giving a state-sponsored group a literal skeleton key to the whole system.

Odyssey: Exactly. No alarms, no smashed windows. We are looking at a threat landscape today where the attack is already inside your perimeter. Because, you know, you actually invited it in through your own automated build pipelines. And that forces us to completely reevaluate how we handle dependency resolution. I mean, we’re dealing with an adversary that understands developer workflows just as well as the developers themselves do. Welcome to the Deep Dive. I’m so glad you are here with us today.

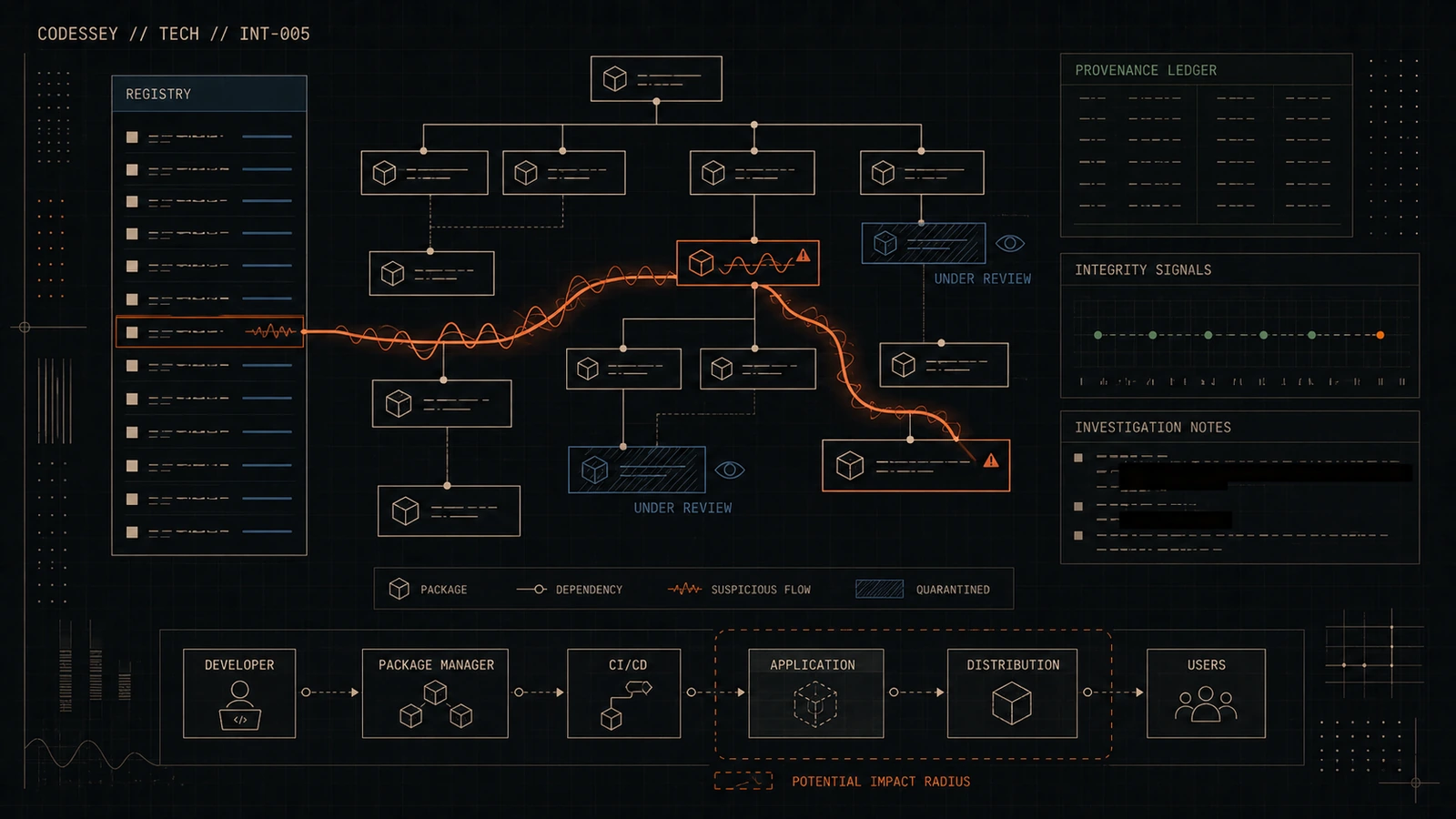

Coda: Our mission for this Deep Dive is to completely dissect a highly sophisticated software supply chain attack. Yes. Specifically, we’re doing a technical analysis of a backdoor known as Waveshaper.v2. We’re going to look at how it systematically evades detection across Windows, macOS, and Linux environments.

Odyssey: Right. Because we are looking at an active, highly successful campaign here. This thing managed to infiltrate one of the core building blocks of the modern web. It executes a multi-stage payload that actually dynamically adapts to whatever hardware it lands on. It’s fascinating.

Coda: It really is. So our source material for this Deep Dive is a detailed threat intelligence report. This was published jointly by the Google Threat Intelligence Group and Mandiant. They documented exactly how a specific threat actor compromised the Axios NPM package. And for anyone managing a Node.js environment, you already know Axios. It’s basically the industry standard library for handling HTTP requests.

Odyssey: Which is why the stakes are so high here. According to the source, this package sees over 100 million weekly downloads. 100 million. A week.

Coda: Right. That kind of volume, it transforms a standard library into critical infrastructure. Penetrating a package with that distribution footprint means your initial attack surface is functionally limitless.

Odyssey: Okay, so let’s unpack this. We have a library downloaded 100 million times a week, and an attacker just managed to slip a polymorphic backdoor right into it. Taking the 30,000-foot view here, the report outlines a multi-stage, multi-platform intelligence operation. They attribute this to a financially motivated group tracked as UNC-1069. And the level of engineering that went into this is just staggering. Oh, absolutely. The way it probes its environment, implements custom string obfuscation, and deploys these OS-specific payloads.

Coda: It shows an adversary building deployment pipelines that rival actual enterprise systems.

Odyssey: Right. They are systematically dismantling the alarm systems from the inside.

Coda: Exactly.

Odyssey: So the mechanics of the initial breach, that’s where you really need to start. The report notes that on March 31, 2026, between just after midnight UTC and 3:20 a.m., the attackers compromised a maintainer account. Yes, for the Axios package itself.

Coda: Right. And they changed the associated email address to an attacker-controlled Proton.me address. I think it was a stap at Proton.me. Compromising a core maintainer account bypasses the entire concept of repository security. Because the platform just assumes it’s the real guy, right?

Odyssey: Exactly. The distribution platform implicitly trusts the cryptographic signature of the maintainer. So by gaining administrative control, the attackers could publish authenticated updates to the registry. Without triggering any upstream code review alerts. None at all.

Coda: But they didn’t replace the core Axios library, did they?

Odyssey: No, because that would immediately break automated test suites globally. People would notice.

Coda: Right. The build would fail. Alarms go off. So instead, they injected a malicious dependency. They added a new requirement to Axios versions 1.14.1 and 0.30.4. And this new dependency was called plain-crypto-js, version 4.2.1.

Odyssey: Which, by the way, that naming convention is a calculated evasion technique. Oh, for sure. Because plain-crypto-js sounds totally harmless.

Coda: Right. It mimics the naming conventions of standard cryptographic utility libraries. So when it’s buried deep inside a package-lock.json file that has, you know, hundreds of other dependencies. It just blends right into the background noise. A module ostensibly handling basic cryptography for an HTTP client wouldn’t raise any eyebrows.
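A minimal sketch of how a defender might search a lockfile for one named package instead of reading it by eye. It assumes the standard npm layouts (a flat `packages` map in lockfile versions 2 and 3, a nested `dependencies` tree in version 1). The function names are illustrative, and the package name is passed in rather than hard-coded, because the spelling heard in this episode should be checked against the published report.

```python
import json
from pathlib import Path


def find_package(lock: dict, name: str) -> list[tuple[str, str]]:
    """Return (location, version) for every copy of `name` in a parsed lockfile."""
    hits: list[tuple[str, str]] = []

    if "packages" in lock:
        # lockfileVersion 2 and 3: flat map keyed by install path,
        # e.g. "node_modules/a/node_modules/b".
        for path, meta in lock["packages"].items():
            if path.split("node_modules/")[-1] == name:
                hits.append((path, meta.get("version", "?")))
        return hits

    # lockfileVersion 1: nested "dependencies" tree.
    def walk(deps: dict, trail: str) -> None:
        for dep_name, meta in deps.items():
            here = f"{trail}>{dep_name}" if trail else dep_name
            if dep_name == name:
                hits.append((here, meta.get("version", "?")))
            walk(meta.get("dependencies", {}), here)

    walk(lock.get("dependencies", {}), "")
    return hits


def scan_lockfile(lockfile: Path, name: str) -> list[tuple[str, str]]:
    """Convenience wrapper: parse a package-lock.json from disk, then search it."""
    return find_package(json.loads(lockfile.read_text(encoding="utf-8")), name)
```

Running `scan_lockfile(Path("package-lock.json"), "<package name>")` across every repository gives a quick yes or no per project, which is easier to trust than scrolling through hundreds of entries.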

Odyssey: Okay. So how does the trap actually spring?

Coda: I was looking at the execution flow, and it seems they leverage the NPM lifecycle scripts. Yes. Specifically the post-install hook.

Odyssey: Right. So the moment a developer runs npm install axios, the package manager pulls down Axios, and then it automatically pulls down that malicious plain-crypto-js.
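Install-time lifecycle scripts are declared in a package's own `package.json`, so they can be listed before anything runs. The sketch below assumes the dependencies have been unpacked with scripts disabled (npm has an `--ignore-scripts` flag for that). It walks a `node_modules` tree and reports every package that declares `preinstall`, `install`, or `postinstall`. The function name is illustrative.

```python
import json
from pathlib import Path

# The npm lifecycle scripts that run automatically during installation.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def packages_with_install_hooks(node_modules: Path) -> dict[str, dict[str, str]]:
    """Map package name -> {hook: command} for packages declaring install-time scripts."""
    found: dict[str, dict[str, str]] = {}
    for manifest in node_modules.rglob("package.json"):
        try:
            data = json.loads(manifest.read_text(encoding="utf-8"))
        except (OSError, ValueError):
            continue  # unreadable or malformed manifest: skipped in this sketch
        if not isinstance(data, dict) or not isinstance(data.get("scripts"), dict):
            continue
        hooks = {h: data["scripts"][h] for h in INSTALL_HOOKS if h in data["scripts"]}
        if hooks:
            found[data.get("name", str(manifest.parent))] = hooks
    return found
```

A scan like this is only meaningful before the hooks have executed. As the episode goes on to describe, this dropper rewrites its own manifest afterwards, so a scan on an already-installed tree could come back clean.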

Coda: And that’s when NPM automatically executes an obfuscated JavaScript dropper. It’s named setup.js, and it runs entirely in the background. Wait. So the post-install hook just runs automatically?

Odyssey: Automatically. The execution happens outside the standard application runtime. It executes with whatever privileges the user running the install command has. So if I run this on my local laptop, it has my access?

Coda: Yes. And if a CI/CD pipeline runs it, the script operates with pipeline service account privileges. Wow. It’s like ordering a high-end blender online, and hidden inside the shipping box is a tiny, silent robot.

Odyssey: That’s a good way to put it.

Coda: Yeah. And the second you open the flaps, the robot climbs out and starts mapping your house. You just wanted a blender, but the automated process of unboxing it activated the payload. And to take your analogy a step further, the robot doesn’t just map the house. It actively works to blind the security cameras while it does so.

Odyssey: Okay. Tell me about that. How does it blind the cameras?

Coda: Well, this dropper, setup.js, which the report calls the Silk Bell component, it operates in development environments that are usually heavily monitored.

Odyssey: Right. Lots of security tools scanning things.

Coda: Exactly. So it employs really aggressive static analysis evasion. This ensures that security scanners reading the JavaScript don’t flag the malicious routines. And we see that heavily in Silk Bell’s implementation of custom XOR and base64 string obfuscation, right?

Odyssey: Yes. They are actively scrambling the command-and-control URLs and the OS-specific execution commands. So if an automated code scanning tool reads setup.js, it just sees gibberish. Basically. It fails to extract actionable indicators of compromise because the actual malicious strings are only dynamically constructed at runtime.

Coda: Right. When the code is actually running.

Odyssey: So the static engine just sees arbitrary math operations and encoded text.
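To make the "gibberish" concrete, here is a small sketch of XOR followed by base64, the general technique the hosts name. The repeating-key scheme and the key itself are assumptions for illustration. The report's actual routine is described as custom and is not reproduced here.

```python
import base64
from itertools import cycle


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR every byte of `data` with a repeating `key`."""
    return bytes(b ^ k for b, k in zip(data, cycle(key)))


def obfuscate(plain: str, key: bytes) -> str:
    """What would be stored in the source file: base64 of the XORed bytes."""
    return base64.b64encode(xor_bytes(plain.encode("utf-8"), key)).decode("ascii")


def deobfuscate(blob: str, key: bytes) -> str:
    """What only exists at runtime: the recovered plaintext string."""
    return xor_bytes(base64.b64decode(blob), key).decode("utf-8")


# Hypothetical key and string, chosen only for the demonstration.
KEY = b"demo-key"
stored = obfuscate("https://example.invalid/stage2", KEY)
```

A scanner that greps the file for `https://` finds nothing in `stored`, because the base64 alphabet cannot even contain a colon. The URL reappears only when `deobfuscate(stored, KEY)` runs, which is why analysts have to execute or emulate the code to recover indicators.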

Coda: Exactly. It forces the defender to move from static analysis to dynamic analysis. But this is where UNC-1069’s technical proficiency really shows. Because of how they load the modules?

Odyssey: Yes. The Silk Bell dropper dynamically loads core Node modules like fs for the file system, os for the operating system, and execSync for running commands. I noticed that detail in the source because standard top-level require declarations for those modules would be a massive red flag.

Coda: Well, a standard static parser building a dependency graph looks for those declarative imports at the top of the file. So if a random crypto library suddenly imports execSync to run system commands, the heuristic scanners would flag it instantly as a high-risk utility script. But by invoking them dynamically, deep inside an obfuscated logic loop, Silk Bell hides its capability footprint completely. It hides it until the exact CPU cycle it actually needs to write to the disk or spawn a child process.

Odyssey: That is wild. The scanner analyzes the file, sees no requests for sensitive operations, and just lets it pass.

Coda: Yep. It allows the file to persist.
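One way to narrow that blind spot is to treat a computed `require` argument as a signal in its own right. The sketch below is a regex heuristic, not a real JavaScript parser. It separates `require` calls with a string literal from calls whose argument is built at runtime. The module watchlist and function name are illustrative assumptions.

```python
import re

# Modules worth a second look in a package that claims to do only cryptography.
# execSync lives in Node's child_process module.
SENSITIVE = {"child_process", "fs", "os"}

# require("name") or require('node:name') with a plain string literal.
LITERAL = re.compile(r"""\brequire\s*\(\s*['"](?:node:)?([^'"]+)['"]\s*\)""")
# require( followed by anything that is not a quote: the argument is computed.
COMPUTED = re.compile(r"""\brequire\s*\(\s*(?!['"`])""")


def scan_requires(source: str) -> dict:
    """Summarise how a JavaScript source string loads its modules."""
    literal = LITERAL.findall(source)
    return {
        "literal": literal,
        "sensitive": sorted(set(literal) & SENSITIVE),
        "computed_calls": len(COMPUTED.findall(source)),
    }
```

A file with no sensitive literal imports but several computed calls is not proof of anything, since bundlers and plugin loaders do this legitimately. In a small utility package it is still a reasonable trigger for dynamic analysis.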

Odyssey: But the cleanup routine that follows the payload drop is arguably the most aggressive part of this initial stage. The anti-forensics.

Coda: Yeah. After dropping the secondary payload, setup.js actively tries to delete itself from the file system.

Odyssey: Which is standard for droppers, but then it does something else.

Coda: Right. It reverts the modified package.json file. It replaces it with an original, completely clean version that it had kept hidden under the file name package.md. It’s actively manipulating the local state of the repository. It wants to ensure any subsequent integrity checks pass. Wait, let me make sure I’m getting this. It breaks in, steals what it needs, and then acts like a digital burglar who not only sneaks inside, but carefully replaces the entire doorknob with a brand new untampered one.

Odyssey: That’s exactly what it does. So if an incident responder comes in after the breach and runs an integrity verification against the local node_modules directory, they won’t see anything wrong. They won’t. The hash of the package.json will perfectly match the clean upstream version.

Coda: That is just terrifying.

Odyssey: It really raises an important question regarding standard incident response methodologies. Because we rely so much on file hashes.

Coda: Right. If your detection engineering relies primarily on disk-based artifact preservation, an adversary doing localized state reversion renders your playbooks totally obsolete. Because by the time the alert fires for the weird network traffic, the initial deployment vector has literally overwritten its own source code.
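The point about single-file hashes can be illustrated with a whole-directory snapshot. This sketch assumes a defender can capture the package directory once before install scripts run (for example, after unpacking with scripts disabled) and again afterwards. The function names are illustrative.

```python
import hashlib
from pathlib import Path


def snapshot(root: Path) -> dict[str, str]:
    """Map each file's relative path to its SHA-256 digest."""
    return {
        p.relative_to(root).as_posix(): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def diff_snapshots(before: dict[str, str], after: dict[str, str]) -> dict[str, list[str]]:
    """List files that disappeared, appeared, or changed between two snapshots."""
    shared = before.keys() & after.keys()
    return {
        "removed": sorted(before.keys() - after.keys()),
        "added": sorted(after.keys() - before.keys()),
        "changed": sorted(k for k in shared if before[k] != after[k]),
    }
```

Checking only the final `package.json` against a known-good hash would pass. Comparing the two snapshots would instead show a dropper script and a spare manifest vanishing and `package.json` changing, which is exactly the self-cleaning behaviour described above.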

Odyssey: Exactly. The evidence is just gone. Wow. Okay, so the Silk Bell delivery vehicle handles the static evasion, it drops the payload, and it scrubs its own tracks. And then the architecture of the host machine dictates everything that happens next.

Coda: Right. Because the JavaScript dropper checks the operating system first. When it analyzes the OS module output and determines it’s sitting on a Microsoft Windows environment, the whole methodology shifts. It has to, right? Because Windows security is a completely different ballgame. Entirely. Obfuscating a node script requires vastly different techniques than masking a malicious executable from a modern Windows EDR sensor.

Odyssey: Right. And EDR, endpoint detection and response tools, those are actively monitoring kernel-level telemetry, looking for weird process behaviors.

Coda: Yeah, specifically ETW-TI telemetry. So according to the report, the dropper actively hunts for the native powershell.exe binary on Windows. But crucially, it doesn’t execute it right there in its normal folder. No, it copies the legitimate PowerShell executable and saves that duplicate into the ProgramData directory. And it renames it to wt.exe.

Odyssey: Which is a brilliant exploitation of process name heuristics. Because wt.exe is the standard executable name for Windows Terminal.

Coda: Exactly. Many EDR configurations maintain heavily optimized, really strict rule sets that specifically watch powershell.exe. Because PowerShell is used in almost every attack these days.

Odyssey: Right. They monitor it for anomalous child processes or weird network connections out to the internet. So it’s basically putting on digital camouflage. By renaming PowerShell to wt.exe, the malware just slips right past signature-based EDR rules that are hard-coded to flag the word PowerShell. It’s hiding right in the shadow of Windows Terminal. The EDR sensor processes the execution, sees a Microsoft signed binary named wt.exe, and just ignores it. Pretty much. It drops the telemetry because it matches the expected profile.
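A renamed copy keeps the same bytes as the original, so content hashing sees through the new name. The following sketch assumes a defender knows where the genuine binary lives and wants to find identical files under other names in a second directory. The function names are illustrative, and real EDR products typically use signed-binary metadata rather than a directory sweep like this.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large binaries do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def renamed_copies(reference: Path, search_dir: Path) -> list[Path]:
    """Find files under `search_dir` with the reference's content but another name."""
    ref_hash = sha256_of(reference)
    ref_size = reference.stat().st_size
    hits = []
    for candidate in search_dir.rglob("*"):
        if not candidate.is_file() or candidate.name.lower() == reference.name.lower():
            continue
        try:
            # Compare sizes first so most files are never hashed.
            if candidate.stat().st_size == ref_size and sha256_of(candidate) == ref_hash:
                hits.append(candidate)
        except OSError:
            continue  # locked or unreadable file: skipped in this sketch
    return sorted(hits)
```

Pointed at the system PowerShell binary and a directory such as ProgramData, a check like this would report a byte-identical file no matter what it had been renamed to.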

Coda: It just looks like a developer opening a command prompt. I mean, that is just so clever. And that camouflage extends right to the network layer, doesn’t it?

Odyssey: It does. When that disguised PowerShell process initiates its next-stage download, it uses curl.

Coda: But the source report specifies a very interesting detail about the POST body of this request.

Odyssey: Yeah, it’s formatted precisely as packages.npm.org/product1. It’s burying the C2 beaconing inside decoy network traffic. Designed to mimic standard NPM registry communications.

Coda: Exactly. Think about it from the perspective of a SOC analyst reviewing proxy logs.

Odyssey: Right. They see a machine that they know does Node development. And it’s reaching out with a payload string that references NPM packages. It perfectly matches the baseline behavioral profile of that specific host machine. You wouldn’t even look twice at that. You wouldn’t. Now, the payload pulled down by that decoy traffic is a script. It gets saved into the AppData temp directory. The report logs it as a random-number .ps1 file in the temp directory.
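The decoy works because the analyst reads the body and not the destination. A rough sketch of the opposite check follows: flag POST requests whose body mentions npm while the destination is not a registry the organization actually uses. The record fields (`method`, `host`, `body`) are a hypothetical log schema, and the allow-list would need any internal mirrors added.

```python
# registry.npmjs.org is the public npm registry host; extend with internal mirrors.
REGISTRY_HOSTS = frozenset({"registry.npmjs.org"})


def looks_like_npm_decoy(record: dict, allowed_hosts: frozenset = REGISTRY_HOSTS) -> bool:
    """True when a POST body talks about npm but goes somewhere that is not a registry."""
    method = (record.get("method") or "").upper()
    host = (record.get("host") or "").lower()
    body = (record.get("body") or "").lower()
    return method == "POST" and "npm" in body and host not in allowed_hosts


def flag_decoys(records: list[dict]) -> list[dict]:
    """Filter a batch of proxy-log records down to the suspicious ones."""
    return [r for r in records if looks_like_npm_decoy(r)]
```

The check is deliberately crude. Its value is that it keys on the mismatch between content and destination, which is the one thing the decoy cannot hide.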

Coda: Yes. And the execution command passed to our fake Windows Terminal process, the wt.exe, utilizes both hidden and execution policy bypass flags.

Odyssey: Okay. Execution policy bypass. Running that via a renamed executable allows it to override local script execution restrictions, right?

Coda: Yes. And it does so without triggering the specific AMSI hooks that might fire if the standard PowerShell attempted that same override. So it’s completely silent and then it sets up persistence.

Odyssey: Right. Following successful execution, it establishes persistence using dual methods. It creates a hidden batch file in the ProgramData directory called system.bat. And then it adds a corresponding entry to the CurrentVersion\Run registry key.

Coda: Which is how things start up automatically when Windows boots.

Odyssey: But the naming convention for that registry key, I love this part.

Coda: It is pure psychological manipulation. Aimed directly at the IT team.

Odyssey: Yeah. They named the registry entry MicrosoftUpdate. All one word. They are absolutely banking on alert fatigue. Oh, for sure. A system administrator reviewing auto run keys during a routine audit is going to see hundreds of entries. They will likely skip right over an entry labeled MicrosoftUpdate. Because the persistence mechanism is hiding behind the perceived legitimacy of routine OS maintenance tasks. Who wants to break Windows update, right?

Coda: Exactly. You leave it alone.
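An audit that judges autorun entries by what they launch, not by what they are called, is harder to fool with a friendly name. The sketch below works on an exported mapping of entry name to command line, so it runs anywhere. On Windows the entries could be read with the standard-library `winreg` module. The directory markers and extension list are illustrative assumptions, not a complete rule set.

```python
import re

# Script types that an autorun entry rarely needs to launch directly.
SCRIPT_EXT = re.compile(r"\.(bat|cmd|ps1|vbs|js)\b", re.IGNORECASE)
# Locations that are commonly writable without administrator rights.
WRITABLE_MARKERS = ("\\programdata\\", "\\appdata\\", "\\temp\\", "\\users\\public\\")


def suspicious_run_entries(entries: dict[str, str]) -> dict[str, list[str]]:
    """Map each flagged autorun entry name to the reasons it was flagged."""
    findings: dict[str, list[str]] = {}
    for name, command in entries.items():
        reasons = []
        if any(marker in command.lower() for marker in WRITABLE_MARKERS):
            reasons.append("runs from a commonly writable directory")
        if SCRIPT_EXT.search(command):
            reasons.append("launches a script file")
        if reasons:
            findings[name] = reasons
    return findings
```

Under these rules an entry pointing at a batch file in ProgramData is flagged on both counts, however reassuring its label looks.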

Odyssey: So the Windows disguise is brilliant. It relies on the registry and EDR blind spots. But none of that architecture exists if the developer is using a Mac.

Coda: Right. Apple Silicon is a completely different ecosystem. So UNC-1069 had to build a parallel evasion engine just for macOS. And the source notes that when Silk Bell detects macOS, it shifts away from all that. It moves to native Unix tooling. It utilizes bash and curl to pull down a compiled Mach-O binary.

Odyssey: Which is the standard executable format for Macs.

Coda: But the directory targeting here is what’s really fascinating. It drops this Mach-O payload into the Library/Caches directory. Specifically under the name com.apple.act.mond.

Odyssey: Okay. Break that down for us. Why that specific path?

Coda: Well, the Library/Caches directory is notoriously noisy.

Odyssey: It is saturated with transient application data. Files are created and deleted there constantly. So it’s a great place to hide. Yes. And furthermore, utilizing reverse domain name notation, the com.apple structure, that directly mimics legitimate Apple background services. Because Apple daemons rely heavily on that specific naming structure.

Coda: Right. So an automated script or even an analyst reviewing running processes, they see com.apple.act.mond running silently via… And they just assume it’s some obscure proprietary system cache monitor from Apple.
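A name can imitate Apple, but a compiled binary still starts with a Mach-O header. As a sketch, assuming that native executables are unusual inside a caches directory, the function below lists files whose first four bytes match a Mach-O or universal-binary magic number. The function name is illustrative.

```python
from pathlib import Path

# First four bytes of Mach-O files as they appear on disk.
MACHO_MAGICS = {
    b"\xfe\xed\xfa\xce",  # 32-bit, big-endian
    b"\xce\xfa\xed\xfe",  # 32-bit, little-endian
    b"\xfe\xed\xfa\xcf",  # 64-bit, big-endian
    b"\xcf\xfa\xed\xfe",  # 64-bit, little-endian
    b"\xca\xfe\xba\xbe",  # universal (fat) binary; Java class files share this value
}


def macho_files(directory: Path) -> list[Path]:
    """Return files under `directory` that begin with a Mach-O magic number."""
    hits = []
    for path in directory.rglob("*"):
        if not path.is_file():
            continue
        try:
            with path.open("rb") as fh:
                head = fh.read(4)
        except OSError:
            continue  # unreadable file: skipped in this sketch
        if head in MACHO_MAGICS:
            hits.append(path)
    return sorted(hits)
```

Calling `macho_files(Path.home() / "Library" / "Caches")` on a developer Mac would surface any compiled executables sitting there for review, whatever they are named. Some legitimate applications do cache helper binaries, so hits are leads rather than verdicts.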

Odyssey: Exactly. They assume it’s a kernel helper and they move on. And it establishes a similar network decoy on the Mac, right?

Coda: It modifies the POST body to packages.npm.org/product0. The structure is identical to the Windows traffic. They’re simply iterating the product identifier. Product one for Windows, product zero for Mac. It’s just tracking the target operating system on the C2 backend. Yes.

Odyssey: So then, moving to Linux environments, the payload delivery shifts yet again. Instead of a compiled binary, the dropper pulls down a Python script. And it just writes it to the temp directory as lead.py. And the network decoy here increments to product two.

Coda: Right. Python is ubiquitous across virtually all enterprise Linux distributions. It provides a native, highly capable runtime environment. Without the attacker needing to manage architecture-specific compiled binaries for every flavor of Linux.

Odyssey: Exactly. But here’s where it gets really interesting for me. The Windows attack relies on copied binaries, registry manipulation, and disguises. The macOS attack perfectly mimics proprietary Apple daemon structures.

Coda: Right. But on Linux, they are just tossing a Python script into the universally writable temp directory. Why does UNC-1069 implement such a drastically simplified deployment path for Linux compared to the heavily engineered evasion tactics on Windows?

Odyssey: Well, threat actors allocate their engineering resources based on the specific telemetry constraints of the target environment. What does that mean in practice?

Coda: Windows enterprise environments are heavily saturated with kernel-level EDR hooks. They monitor specific binaries very closely. Evading that requires elaborate process hollowing or image renaming, like we saw with wt.exe. And macOS requires blending into those strict application bundle structures.

Odyssey: Right. But Linux server and development environments, they are characterized by rapid execution and transient file system operations. Automated pipelines constantly writing to and executing from the temp directory. Yes. The sheer volume of legitimate execution noise occurring in /tmp provides a massive smoke screen all on its own. Ah, so they don’t need to be as stealthy because the room is already crowded and loud.

Coda: Precisely. An adversary doesn’t need to engineer a complex process doppelganger if they can simply drop a script into a directory where thousands of automated undocumented executions happen every single hour. That makes total sense. The attack path is optimized for the specific operational culture of the OS.

Odyssey: Exactly.

Coda: Okay, so the deployment footprint is highly specialized per OS. But once those disparate droppers execute, they all converge on the same operational payload. Yes, the Waveshaper.v2 backdoor itself.

Odyssey: Right. We need to look at the capabilities of this backdoor. Because regardless of the host OS, Waveshaper.v2 initiates its beaconing routine to its command and control server, identified in the report as sfrclack.com, over port 8000, establishing a 60-second callback interval.

Coda: But the beacon implements a hard-coded user agent string that is very deliberate. Yes, this immediately caught my eye in the source. The user agent is Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 5.1; Trident/4.0).

Odyssey: Which, decoded, means it identifies the client as Microsoft Internet Explorer 8 running on Windows XP.

Coda: Right. And why would a highly advanced threat actor deploying these cutting-edge polymorphic supply chain attacks use an archaic user agent from, like, 20 years ago?

Odyssey: That should stick out like a sore thumb to a network defender analyzing proxy logs today. You would think so. And on a perfectly sterilized, highly modern network architecture, an IE8 string would immediately trigger a high-severity alert. But most networks aren’t perfectly sterilized, are they?

Coda: Not at all. Massive enterprise networks, especially those with legacy manufacturing equipment or old internal HR infrastructure, they routinely generate anomalous traffic. From deprecated systems that literally cannot be updated.

Odyssey: Exactly. Threat actors deploy archaic user agents to blend into that specific category of ignored legacy network noise. Oh, wow. So they are betting that the SOC team will classify the beacon as just another misconfigured internal server rather than a modern external threat. They’re banking on it being categorized as tech debt and ignored.
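Two traits mentioned in this part of the episode can be combined into one triage rule: a legacy Internet Explorer user agent and a steady callback interval. The sketch below is one possible heuristic. The tolerance values, the minimum event count, and the function names are assumptions chosen for illustration.

```python
import re
from statistics import mean, pstdev

# Matches IE 8 or older on the Windows NT 5.x family (Windows 2000, XP, Server 2003).
LEGACY_UA = re.compile(r"MSIE [1-8]\.\d+; Windows NT 5\.", re.IGNORECASE)


def is_legacy_ie_agent(user_agent: str) -> bool:
    """True for user agents claiming an old Internet Explorer on Windows NT 5.x."""
    return bool(LEGACY_UA.search(user_agent))


def looks_periodic(timestamps: list[float], expected: float = 60.0,
                   tolerance: float = 5.0, min_events: int = 5) -> bool:
    """True when request times (in seconds) are spaced at a steady interval."""
    if len(timestamps) < min_events:
        return False
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    # Both the average gap and its spread must sit close to the expected interval.
    return abs(mean(gaps) - expected) <= tolerance and pstdev(gaps) <= tolerance


def beacon_candidate(user_agent: str, timestamps: list[float]) -> bool:
    """Combine both signals: legacy agent and metronomic timing."""
    return is_legacy_ie_agent(user_agent) and looks_periodic(timestamps)
```

Either signal alone is noisy on a network with real legacy hosts. Requiring both, from a machine that is known to be a modern developer workstation, is what lifts the traffic out of the "tech debt" bucket.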

Coda: That is devious. And what’s fascinating here is the technical evolution of this backdoor. The Threat Intelligence Group previously tracked Waveshaper as a macOS and Linux-specific tool using lightweight, raw binary protocols. But version 2 transitions the entire C2 communication infrastructure to JSON.

Odyssey: Which is a huge upgrade.

Coda: It is. The shift to JSON payloads allows for vastly expanded system telemetry collection.

Odyssey: So they aren’t just holding open a dumb shell anymore.

Coda: Right. They are actively structuring data regarding the hostname, boot times, and comprehensive running process lists. It transforms the backdoor into a highly scalable intelligence-gathering platform. And the command subset supported by version 2 really reflects that maturity. It processes a kill command to terminate the agent, rundir to map local file systems, and runscript, designed specifically for decoding and executing AppleScript payloads on Macs.

Odyssey: But the command that demands intense scrutiny from this report is peinject. Yes, peinject. The source defines this as an in-memory portable executable injection routine.

Coda: Which fundamentally alters the detection surface area. I was looking at the explanation for this command, and it looks like it’s completely bypassing the hard drive. It runs entirely in the system’s RAM. Is this what they call fileless malware, or is it something else?

Odyssey: No, you have it right. It is the defining characteristic of fileless execution.

Coda: Okay. Walk us through exactly how that happens. When the C2 server issues a peinject command, it transmits a secondary, arbitrary, binary payload over the network. But it doesn’t save it as a file. Correct. Waveshaper.v2 decodes this payload directly into volatile memory. Into the RAM.

Odyssey: Right. It then performs ad hoc signing to satisfy internal OS validation checks.

Coda: Yeah. It maps the portable executable structure, aligning the sections and resolving the import address table. And then executes the shellcode entirely within the memory space of an already running process. So because that secondary payload is never actually written to the disk, any security agent relying on static disk scanning is entirely blind to it. Entirely blind. The malicious code exists exclusively as electrical states in the RAM. It vanishes instantly upon a system reboot.

Odyssey: That’s incredible. And that capability allows UNC-1069 to silently deploy heavy destructive secondary tooling, right?

Coda: Things like credential scrapers or lateral movement frameworks. With virtually zero disk-based forensic footprint. You are forced to rely entirely on capturing anomalous API calls or performing live memory forensics just to even confirm the payload executed in the first place. The technical mechanics here, the AST parser evasion, the wt.exe subversion, the Mach-O caching, the JSON telemetry, and this memory resident PE injection. It represents an incredibly deep technical stack. It’s a highly capable adversary.

Odyssey: Right. But we need to connect this highly technical analysis back to the broader implications for the listener, for the organizations actually managing these environments.

Coda: Absolutely. We have to evaluate the so what of the intelligence report. Knowledge of these specific API calls is only valuable when applied to structural defense.

Odyssey: So the report attributes this entire operation to UNC-1069. They note that their activity dates back to at least 2018. Yes. And they observe direct infrastructure overlaps to make that attribution. Specifically tracking connections from an Astrill VPN node and an autonomous system number that the group has historically used. And if we connect this to the bigger picture, the source explicitly identifies UNC-1069 as a North Korea nexus threat actor. Driven primarily by financial motivation.

Coda: Right. They are mapping this campaign alongside recent massive software supply chain attacks, like the UNC-6780 attacks targeting Trivy and Checkmarx via PyPI and GitHub Actions. The objective in those parallel attacks was deploying credential stealers to facilitate large-scale extortion and crypto theft, right?

Odyssey: Yes. When a group compromises an NPM package with 100 million weekly downloads, the volume of exposed API keys, cloud infrastructure tokens, and database passwords is just catastrophic. Because developers have all of those secrets locally on their machines to do their work.

Coda: Exactly. And the access brokering and credential theft facilitated by an attack of this magnitude, it fuels the entire secondary market for ransomware and extortion operations. It’s like finding out the municipal water treatment plant itself has been compromised. You trust the water coming out of your tap because you trust the facility it comes from.

Odyssey: Right. Developers inherently trust NPM. When you execute an installation command, you are operating on the fundamental assumption that the registry’s cryptographic signatures guarantee the code’s safety.

Coda: But the vulnerability here isn’t a buffer overflow or a misconfigured firewall. The vulnerability is the inherent trust model of the open source ecosystem itself. The adversaries are bypassing the perimeter by hiding inside the very tools we rely on to build our infrastructure.

Odyssey: Which is why the remediation guidance is so aggressive.

Coda: Yeah. The report details highly specific, actionable remediation steps for organizations that suspect they’ve been exposed to these Axios versions. The immediate directive is obviously to halt any upgrades to Axios versions 1.14.1 or 0.30.4. But it goes deeper than just avoiding those versions. The foundational defense mechanism here is strict dependency pinning within the package-lock.json file, right?

Odyssey: Yes. Organizations must configure their build environments to reject minor or patch version upgrades automatically. So you don’t just pull the latest version blindly.
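Pinning is easy to check mechanically. A minimal sketch follows, assuming the goal is that every entry in `package.json` names one exact version and not a range such as `^1.2.3` or `~1.2.3`. The function name and the choice of sections are illustrative.

```python
import re

# An exact semver version, optionally with pre-release and build metadata.
EXACT = re.compile(r"^\d+\.\d+\.\d+(?:-[0-9A-Za-z.-]+)?(?:\+[0-9A-Za-z.-]+)?$")
SECTIONS = ("dependencies", "devDependencies", "optionalDependencies")


def unpinned(manifest: dict) -> dict[str, str]:
    """Return {package: spec} for every dependency that is not an exact version."""
    loose: dict[str, str] = {}
    for section in SECTIONS:
        for name, spec in manifest.get(section, {}).items():
            if not EXACT.match(spec):
                loose[name] = spec
    return loose
```

Anything that is not a bare version, including tags and Git URLs, is reported. Pairing a check like this with `npm ci`, which installs from the lockfile and fails when it disagrees with `package.json`, keeps a pipeline from silently picking up a newly published release.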

Coda: Exactly. Ensuring only explicitly audited and approved package versions execute within the pipeline. The report also emphasizes utilizing tools to audit your dependency trees, specifically looking for the plain-crypto-js module, versions 4.2.0 or 4.2.1. And if that specific package is found in a development environment or a CI/CD server, the remediation guidance from the source is severe.

Odyssey: Yeah. You can’t just uninstall the package and assume the environment is secure, can you?

Coda: No. Absolutely not. Due to the peinject memory execution capabilities and the active deployment of credential scrapers we talked about.

Odyssey: Right. The fileless stuff. If the post-install hook executed, you must assume total host compromise. So burn it all down. Pretty much. The host must be entirely rebuilt. And crucially, every single credential, secret, or token present in that environment’s memory space must be immediately rotated. Wow. Every token. Every single one. The report also mandates clearing local and shared NPM, Yarn, and pnpm caches on all workstations. Yes. Because if the compromised plain-crypto-js package remains in the local cache directory, a developer rebuilding their environment might just inadvertently reinstall the malware locally.

Coda: Even after the central repository has been sanitized.

Odyssey: Exactly. It would just pull from the local cache. At the network layer, they recommend blackholing the C2 infrastructure. So blocking sfrclack.com and the associated IP, which is 142.11.206.73. While simultaneously tuning EDR policies to heavily monitor Node.js applications spawning any unexpected child processes. And structurally, the source really pushes for the isolation of development environments. Running developer workflows inside restricted containers or sandboxes.
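The EDR tuning idea can be sketched as a simple filter over process-creation events. The event fields (`parent`, `child`) are a hypothetical schema, and the watchlist is an assumption assembled from the tools named in this episode. A real policy would live in the EDR product and would need exceptions for build tooling that legitimately shells out.

```python
from pathlib import PureWindowsPath

NODE_NAMES = {"node", "node.exe"}
# Interpreters and download tools that a Node.js process rarely needs to spawn.
WATCHED_CHILDREN = {
    "curl", "curl.exe", "bash", "sh", "python", "python3",
    "powershell.exe", "pwsh", "cmd.exe", "wt.exe", "osascript",
}


def base_name(path: str) -> str:
    """Lower-cased file name; PureWindowsPath splits on both / and \\ separators."""
    return PureWindowsPath(path).name.lower()


def node_spawned_suspicious(events: list[dict]) -> list[dict]:
    """Keep events where a Node.js process started one of the watched programs."""
    return [
        event for event in events
        if base_name(event["parent"]) in NODE_NAMES
        and base_name(event["child"]) in WATCHED_CHILDREN
    ]
```

Native-module builds and some test runners will trip a rule like this, so it is better suited to triage and correlation with install activity than to outright blocking.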

Coda: Right. Because that prevents a malicious post-install hook from breaking out and scraping plain text secrets from the broader host file system or OS keychain. It requires a fundamental shift toward zero trust principles applied directly to the local development environment, which is a huge cultural shift for most dev teams. Oh, absolutely. So what does this all mean? We started today with a poisoned open source package, Axios, sitting quietly in a trusted repository.

Odyssey: We analyzed how a silent JavaScript dropper executed via a post-install hook, manipulating local package states to thwart forensic integrity checks. And we traced its polymorphic execution paths. We dissected how it mimics Windows Terminal to subvert EDR heuristics. How it utilizes reverse DNS naming conventions to blend right into macOS caches. And how it leverages the transient noise of Linux temporary directories to just hide in plain sight. And then we broke down the Waveshaper.v2 payload itself, examining how it masks JSON telemetry behind legacy IE8 user agents, and utilizes peinject to execute arbitrary binaries directly in RAM without ever touching the disk.

Coda: It really leaves us with a critical architectural question.

Odyssey: It does. We rely on millions of lines of open source code to build the modern internet. When the very collaborative code-sharing communities we implicitly trust become the delivery mechanisms for highly advanced memory resident malware, where does the security perimeter actually exist anymore?

Coda: It’s a sobering thought. Is it possible that our foundational trust in the software supply chain is the single unpatchable vulnerability in modern software architecture?

Odyssey: Something for you to mull over the next time your CI/CD pipeline automatically resolves its dependencies. Thank you so much for joining us on this deep dive. Keep questioning your trust models and seriously, go audit your lock files. Stay secure.