Tech | Consistent Hashing Solves the Scaling Catastrophe

A technical episode on consistent hashing, cache churn, sharding, and how distributed systems scale without catastrophic remapping.

Listen

Listen on available platforms

Episode text

Transcript

Overview

Distributed systems, load balancing, cache churn, sharding, and scaling failure modes.

Transcript

Coda: Welcome to the Deep Dive, the place where we skip the endless research spirals and bring you the concentrated knowledge you need to really understand the big architectural decisions behind the modern internet. Today we are tackling what might be the single biggest, you know, existential threat to any successful online service. And that is scaling.

Odyssey: Exactly. Scaling. It sounds like a great problem to have. Your traffic is spiking. You need more servers. The dream scenario. But if you handle that wrong, the solution itself becomes the poison. It can lead to an immediate catastrophic system collapse.

Coda: That is the ultimate paradox, isn’t it? You add servers to survive, but the very act of adding them can kill the whole system.

Odyssey: Right. And today we’re diving deep into the ingenious solution to that exact problem, consistent hashing.

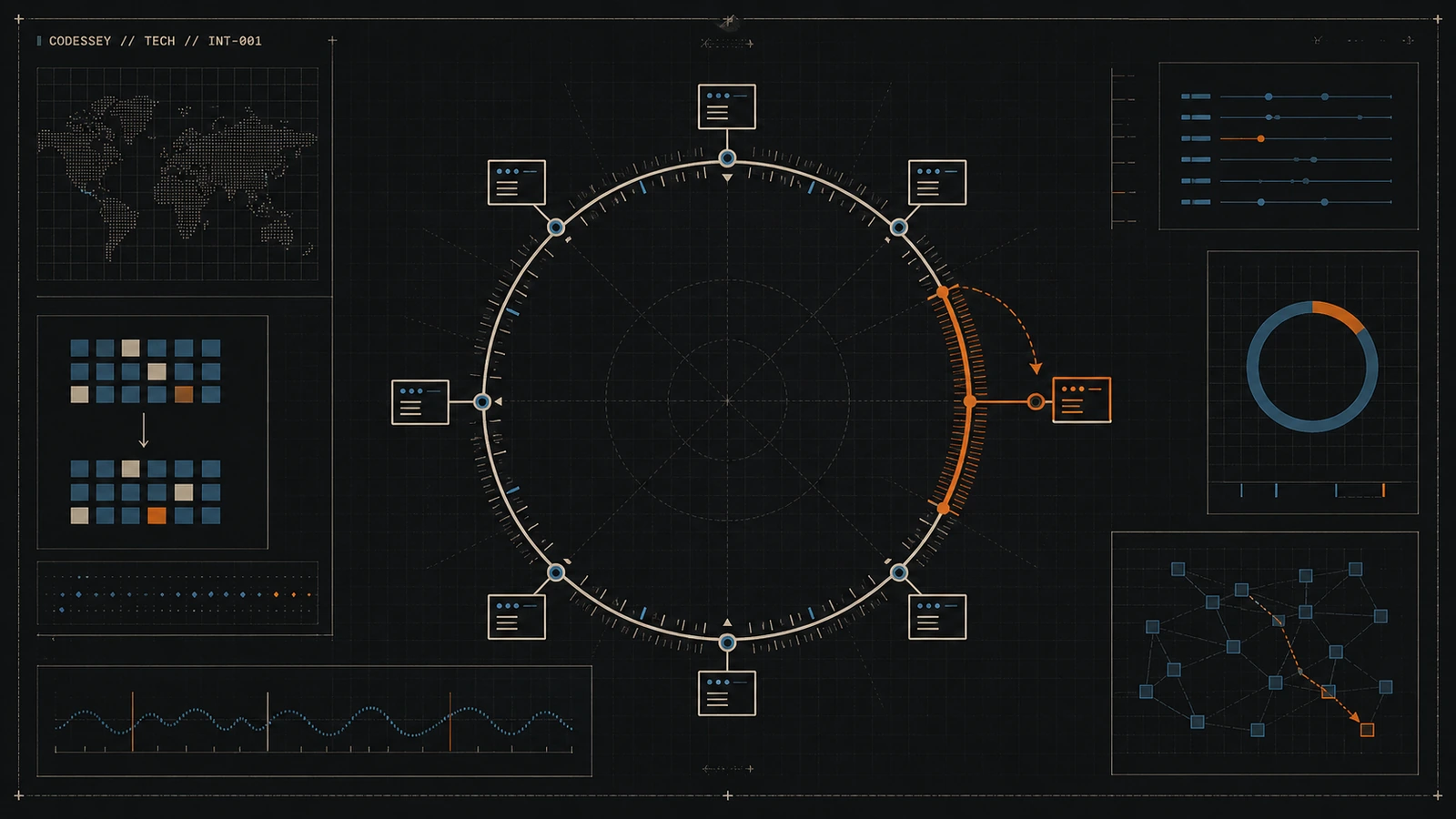

Coda: This isn’t just theory. This is a mechanism that allows giants like Amazon’s DynamoDB, Apache Cassandra, and massive CDNs to scale up or down dynamically, absorbing huge shifts in load without just imploding. And our mission today is to start right at the point of failure. We’re going to look at the naive approach first and then build up the architecture piece by piece. So we’ll visualize the hashring, understand how it uses geometry to provide consistency, and then break down the really complex but mandatory layers like virtual nodes that make it work in the real world.

Odyssey: We’re looking for that precise mathematical tradeoff that separates resilient scale from, well, inevitable collapse.

Coda: Okay, let’s unpack this. Let’s start with the problem, the catastrophe. Imagine you’re running a really popular distributed cache.

Odyssey: Okay. Your traffic just doubled. You need to add new servers, right?

Coda: Horizontal scaling, you have n servers, and you need to add one more. What’s the most obvious, the most intuitive way to decide which server a key should live on?

Odyssey: Well, the first instinct, the simplest math you can reach for, is just traditional modulo hashing.

Coda: Right. You take your key, let’s say it’s a user ID or a product SKU, run it through a standard hash function to get a big number. And then you just apply the modulo operator.

Odyssey: Yep. Based on the current number of servers, n. The formula is beautifully simple. Server index equals hash key mod n.

Coda: It really is simple. If you have 10 servers, n is 10. The result is always a number between 0 and 9, and that just maps directly to a server. It’s fast. It’s just basic arithmetic. You get a guaranteed 01 lookup time. It seems perfect. It seems perfect.

Odyssey: It is perfect, but only as long as n is static. The fundamental flaw is that the entire system of assigning keys is completely tied to n. It’s non-partition tolerant.

Coda: Exactly. It cannot tolerate any change in the number of partitions without massive consequences. And this is where the sources give that really shocking example that shows why this simple scaling can be fatal. Let’s use their numbers. We have nine nodes in our cluster.

Odyssey: Okay, nine nodes. Traffic demands, we scale up to 10. We’re only adding one server. It’s a modest, what, 10% capacity increase?

Coda: You’d expect minimal disruption. You would, but you’d be wrong. The entire mathematical domain has shifted. Because n changed. When n changes from 9 to 10, the remainder of almost every division operation changes. If you calculate the percentage of keys that have to be rehashed and moved, the formula is new one. New. So in our case, that’s 10, one.

Odyssey: Which is 90%. 90% of all existing keys must instantly find a new home. 90%. That’s basically everything.

Coda: Yeah. And you’re adding this capacity because you already have a traffic spike, which means your system is already pushed to its limit. Now you have to trace the catastrophic cascade failure this generates.

Odyssey: Okay, walk me through it. A client application asks for a key. 90% of the key space has just moved.

Coda: So the client hits the old server, gets a cache miss. And has to try the new server.

Odyssey: Right. But the moment the client gets that cache miss, it generates a synchronous request that hits the next layer down. The database. The origin server. And since 90% of your entire cache population just invalidated itself, you get this massive instantaneous wave of cache misses that turns into an overwhelming demand on the database. The database, which was already straining under the traffic spike.

Coda: Exactly. It suddenly hit with a connection storm. It starts exhausting its thread pools, hitting connection limits, latency spikes. And then, of course, user requests start timing out. So you added servers to save the system.

Odyssey: But the massive data churn, the OTA data churn, it just caused an immediate unavoidable collapse. That O1 lookup simplicity becomes totally useless because the operational cost is catastrophic.

Coda: So the entire point of consistent hashing is to eliminate that 90% data churn nightmare. To make sure that adding or removing a node is a smooth operation, not a suicide pact.

Odyssey: Precisely. Consistent hashing was introduced to achieve partition tolerance. It’s a distribution scheme that mathematically decouples the key assignment from N, the cluster size.

Coda: And that’s the monumental shift right there. The resulting benefit is quantifiable. Whether a node is added or removed, consistent hashing limits key relocation to roughly one N of the total keys. So let’s go back to our example, 9 adds to 10. Instead of moving 90% of the keys… You’re now moving only 110th, or 10%, of the keys. And that 10% is a controlled surgical migration. It allows the system to scale gracefully instead of just detonating itself.

Odyssey: Okay, so once we abandon that simple modulo math, we need a new foundation.

Coda: Right. And this is where the visualization becomes so critical. We’re moving from simple math to geometry. This brings us to the core concept, the hash ring. The hash ring is the foundational image you need to have. I want you to picture the entire output space of your hash function. So numbers from zero all the way up to the max integer value. For a 32-bit or 64-bit hash, say?

Odyssey: Exactly. And picture all those numbers arranged as a continuous, fixed, circular dimension.

Coda: The key word here is fixed.

Odyssey: So the circle is static.

Coda: It doesn’t stretch or shrink if we add or remove servers.

Odyssey: That is the crucial decoupling right there. The size is independent of the cluster size. Now, we use the same uniformly distributed hash function to map two very different things onto this fixed circle. What are they?

Coda: First, we map server identifiers, like their IP address or host name. And second, we map the data keys themselves.

Odyssey: Okay, let’s track that. I have server A, server B, and server C. I hash their IDs, and they just land on three random-looking spots on this ring.

Coda: Yeah. Then I hash a specific data key, key K, and it lands on another spot. And since the hash function is uniform, everything should be pretty randomly scattered.

Odyssey: That’s the idea. The distribution of servers on the ring depends entirely on the hash of their IDs. And these scattered server points, they implicitly divide the ring into segments. So each server becomes responsible for a segment of that hash space.

Coda: Right. But how do we decide which segment a key belongs to?

Odyssey: This requires the rule of assignment.

Coda: Okay, what’s the rule?

Odyssey: It’s an elegant, simple, but crucial rule. The clockwise rule. To store or retrieve a key, you find its hash position on the ring. Then you just travel around the ring clockwise until you find the very first server node. And that server is the one responsible for the key.

Coda: That’s the one. It’s like finding the nearest post office, but only in one specific direction. Let’s nail this with a concrete example.

Odyssey: Yeah. Let’s say the ring runs from 0 to 10,000, just for simplicity. Key K hashes to position 1123. We start moving clockwise from 1123.

Coda: Right. Server X is at position 900. We skip it because we’re going clockwise. We passed it. Server Y is at 1500. We hit it first. So server Y stores key K.

Odyssey: Yep. And it doesn’t matter that server Z is at 5000 because Y was the first one you encountered moving in that direction. Got it. So server Y is responsible for all the hash base that starts immediately after its counterclockwise neighbor and runs right up to its own position. Any key in that arc goes to server Y. Perfect. So if I were explaining this to a friend, I’d say we ditched the volatile math, the mod n that depended on a changing number.

Coda: Right. And instead, we adopted a stable geometric assignment based on clockwise proximity on a huge fixed circle. That fixed reference frame is the source of the consistency.

Odyssey: That’s the perfect summary. It’s the move from algebra to fixed geometry that solves the problem.

Coda: Okay. So now we have to connect that to the big promise, minimal data movement when the cluster changes.

Odyssey: Right. Why does this geometric assignment translate into minimal churn?

Coda: Let’s trace it. The promise is only one n keys move. What happens when we add a new node?

Odyssey: Let’s call it snoo. When snoo is added, it lands randomly somewhere on the ring. And the critical insight here is that its insertion point only affects keys that were previously assigned to its immediate clockwise neighbor. It’s one clockwise neighbor, let’s call it sold.

Coda: Yep. And the effect is so incredibly localized because the clockwise rule defines ownership based on the next server you find. So before, keys in a certain arc would travel around and hit sold first.

Odyssey: Right. That arc was the segment between sold’s counterclockwise neighbor and sold itself. When snoo lands somewhere in that specific arc, it physically breaks that one segment into two. Ah, I see. So snoo creates a new smaller segment leading up to its own position.

Coda: Exactly. Any key that lands in that new, smaller segment, which previously would have gone straight to sold. Now find snoo first.

Odyssey: Precisely. Snoo effectively steals keys, but only from its neighbor downstream, sold. And importantly, the clockwise path for keys assigned to every other server on the ring remains completely unchanged. They still see the same neighbor they saw before. The locality of that change is what ensures 90% of your data stays put. It fulfills the promise of one end churn. That surgical precision is what we’re after. But what about the other side of the coin?

Coda: Fault tolerance. What happens if a node is removed or just fails?

Odyssey: If a server is failed, crashes, or you take it down for maintenance, all the keys it was responsible for have to be reassigned instantly. And the clockwise rule handles that automatically.

Coda: It does. And it’s equally localized. You just remove spailed from the list of active nodes on the ring.

Odyssey: So what happens to its keys?

Coda: The keys that were assigned as failed meaning, all the keys that found it as their first clockwise node, now just, they skip over that empty spot and automatically fall onto the next operational node in the clockwise direction.

Odyssey: So the entire load from the failed node is absorbed by a single adjacent successor.

Coda: That’s it. So both adding and removing a node result in this maximum localized disruption. It’s confined to just a single neighbor.

Odyssey: That’s the genius of it. But this brings up a performance question. We talked about O1 lookups for the naive hashing. That was its one big advantage.

Coda: Right. If my application has to conceptually walk around a ring of thousands of nodes, we’re back to a linear search.

Odyssey: That’s O1N, which is a performance killer. How do we make this decision fast?

Coda: This is the crucial implementation detail, the architectural trade-off. We avoid that slow linear search. We don’t physically traverse the ring.

Odyssey: So what do we do?

Coda: Instead, we map the node hash values into a really efficient ordered data structure. The sources mention a couple of common choices, a sorted array or a binary search tree. Ah, so we can search it quickly. We’re essentially looking for the ceiling element, the first server hash that’s greater than or equal to our keys hash.

Odyssey: Precisely. Because the node hashes are stored in a sorted structure, we can leverage a specialized search. The lookup complexity becomes O log N, logarithmic time.

Coda: Okay, let’s stop and really scrutinize that trade-off because this is a big one. We are giving up that lightning-fast O1 of naive hashing for O log N. We are. Is that actually negligible when you’re processing millions of requests per second?

Odyssey: Does the system start to feel sluggish?

Coda: That’s the critical question. And the magnitude of N really matters.

Odyssey: Yeah. If you have a small cluster, say N10 nodes, log base 2 of 10 is tiny. It’s like three or four operations.

Coda: Okay, not bad. If N grows to 1,000, log base 2 of 1,000 is still only about 10 operations. It’s still pretty small. And even if you’re running a giant cluster of, say, 100,000 nodes, log base 2 of 100,000 is still only around 16 or 17 steps. Wow.

Odyssey: Okay, so 17 steps versus one step. It’s measurable, but it’s a small, constant overhead. And it’s a price you are more than happy to pay to avoid that 90% chance of system implosion.

Coda: Absolutely. It’s a tradeoff of a little bit of operational speed for guaranteed system stability. And I assume the implementation has to handle the wraparound rate.

Odyssey: It does. If your key hash is, say, 9,500 in our 10,000 point ring, but the largest server hash is only 8,000. The search for a greater than value will fail.

Coda: Right.

Odyssey: So the implementation needs a check. If it fails, it has to instantly wrap around and assign the key to the server with the smallest hash value, the one closest to zero. To continue that clockwise search.

Coda: Yep. And that same mechanism ensures that managing the ring, adding or removing a node, also happens efficiently in O log N. Time to update that sorted index. So that layer of detail, the sorted data structure handling the wraparound, that’s what makes this conceptual geometric rule into a practical high-performance algorithm.

Odyssey: Exactly.

Coda: Okay. So we solved the rehashing nightmare. The core concept gives us localized churn, but the sources highlight another major problem. The basic algorithm still has a severe performance-killing vulnerability, geometric clustering.

Odyssey: This is the weakness of pure randomness on a fixed circle. Because the server IDs are placed randomly, you will inevitably end up with nodes that just cluster together by chance. Imagine three servers, A, B, and C, all hashing into a tiny 5% segment of the ring.

Coda: Well, if they’re all bunched up like that, that leaves a massive 95% chunk of the ring space that is owned entirely by the next server clockwise. Let’s call it server D. And server D becomes an immediate crippling hotspot.

Odyssey: Right. It owns a disproportionately huge part of the ring. It gets overloaded, latency spikes, it’s about to fail, while A, B, and C are basically sitting idle. It completely defeats the purpose of load balancing and can still kick off those cascading failures we’re trying to avoid. So relying on the random placement of just a few physical server points is too fragile. We need a way to introduce statistical uniformity, even if the placement is random.

Coda: And the solution for that is the introduction of virtual nodes or V nodes.

Odyssey: Okay, what’s a V node?

Coda: The concept is that a V node is just a virtual representation of a physical server. So instead of placing physical server A on the ring once… You give it multiple identities. You assign it dozens or even hundreds of virtual identities. Each V node gets its own unique hash and each one is distributed randomly across the ring. So a physical server A might be represented by V node A1, V node A2, all the way up to, say, V node A128.

Odyssey: And they’re just scattered all over the ring.

Coda: Precisely. By scattering a large number of V nodes for every physical server, we shift the distribution problem away from geometric dependence. From the luck of where three points happen to land. To a robust statistical mechanism.

Odyssey: This is where the law of large numbers kicks in. Because if you scatter hundreds of points per server, even if a few V nodes happen to cluster, the overall distribution of the total arc length owned by all 100 V nodes for that server will mathematically trend towards the uniform average.

Coda: That is the core statistical robustness. It smooths out the rough randomness of individual placements.

Odyssey: The keys are guaranteed to be spread much more uniformly among the many V nodes, which in turn ensures uniform load across the actual physical hardware. This V node structure, though, it sounds like it adds a lot of complexity. I have to push back a bit on this tradeoff. It feels like we’ve solved the load balancing problem by introducing a whole new layer of state to manage. It’s a mandatory tradeoff. V nodes are non-negotiable for production stability, but they absolutely introduce state proportional to OV times N virtual nodes multiplied by physical nodes.

Coda: So if you have a cluster of 1,000 physical nodes, and the standard is, say, 128 V nodes per node.

Odyssey: Which is pretty common. That means you’re now managing 128,000 hashed node identifiers in your sorted array.

Coda: That’s a significant memory footprint just for the index.

Odyssey: It is significant, but it’s cheap compared to the alternative. The memory and CPU cycles you spend managing those 128,000 entries are minimal compared to the cost of one major server failing from a hotspot and triggering data loss or a cascading failure. The complexity is a necessary overhead.

Coda: It is. It’s what you pay for statistical fairness and operational stability. So let’s talk about how V nodes specifically improve fault tolerance. Without them, we said a failed node dumps its entire load onto one single neighbor. With V nodes, that massive load dump is avoided. When the physical node fails, its load is scattered across the ring, represented by its 128 V nodes, for example. And since those V nodes are independent points on the ring…

Odyssey: Each one gets reassigned to its own distinct clockwise neighbor. Ah, so the failed load is automatically distributed or scattered across maybe 128 different surviving physical servers instead of being concentrated on just one.

Coda: Exactly. This smooth distribution is key. The sources note this can limit the extra burden on any single remaining server, ensuring one failure doesn’t overwhelm a successor and start a chain reaction. This scattering ability also makes V nodes essential for managing a heterogeneous cluster, which the sources call weighted consistent hashing.

Odyssey: Right. If you have a mix of hardware-no-powerful machines and older, less-capable ones, you can’t just distribute the key space equally. The old ones would fall over. The solution is to use V nodes as a weighting mechanism. The count of V nodes you assign to a server determines its weight. So if server X is twice as powerful as server Y, you just give it twice as many V nodes. Server X gets 200. Server Y gets 100.

Coda: And that’s precisely how you decouple the hardware reality from the logical partition size. Server X will automatically take on twice the key space, maximizing your cluster’s utilization without risking overload on the weaker machines. That flexibility is fundamental to platforms like DynamoDB and Cassandra, isn’t it?

Odyssey: It is. It’s how they manage their massive, constantly evolving hardware fleets. It’s clear that consistent hashing optimized with V nodes is really a mandatory foundation for these massive, scalable systems. Where do we see its influence most prominently today?

Coda: Well, it’s the architectural spine of the NoSQL database world. Both Amazon DynamoDB and Apache Cassandra rely on highly refined, weighted CH with V nodes. And that lets them handle petabytes of data and stay highly available during rebalancing, which must be happening constantly. All the time as load shifts. And it’s crucial in the content delivery world, too. The CDNs.

Odyssey: Absolutely. CDNs like Akamai use it to distribute cached content and manage request routing across their globally dispersed edge servers. So that when you request a file, that request consistently lands on the same edge server.

Coda: Right. Which maximizes the chance of a cache hit and drastically reduces latency for you. And we mentioned distributed caching at the start. I saw the term Kedema mentioned. What’s its role?

Odyssey: Kedema is a widely adopted variation, especially in memcached client libraries. It essentially provides a standardized, reliable way to implement consistent hashing by pre-calculating and managing the VNode distribution. So it makes sure all the clients have a shared, efficient view of the ring?

Coda: Exactly. It guarantees keys land reliably on the correct server, which insulates the database from that cache churn when a node joins or leaves.

Odyssey: Okay, before we move on to alternatives, let’s circle back to the engine of this whole system, the hash function itself. We know we can’t use slow cryptographic hashes. What makes for an ideal hash here?

Coda: Speed and uniformity are paramount. You need a fast, non-cryptographic hash that produces a statistically excellent, uniform distribution across the entire hash space. Because if the hash function itself produces clustering, the VNode system has to work that much harder to compensate?

Odyssey: Right. The industry defaults for high-speed processing are generally Murmur hash or X-AX hash. They offer the speed you need without the computational expense of crypto.

Coda: Okay, so we’ve covered optimized, consistent hashing with VNodes. It’s complex, it’s resilient, and it gives you a log-in lookup. But not every system needs that level of complexity. Are there simpler alternatives?

Odyssey: Yes, and the choice is a strategic one based on what your system prioritizes. Simplicity, speed, or uniformity. We have two major alternatives to consider. Let’s start with rendezvous hashing, which is also called highest random weight, or HRW. HRW is conceptually simpler because it completely bypasses the need for the hash ring and VNodes. How does it work?

Coda: The mechanism requires every client to calculate a score for the key against every single available server. The score is usually a weighted hash of the key and the server ID. And the server that produces the highest score wins the key.

Odyssey: That’s it. It sounds incredibly uniform because every server gets a chance to bid for the key, so to speak. It provides superior innate uniformity, and you avoid all that complexity of managing VNode state. But that elegance comes at the cost of lookup speed. Because the client has to compute a hash against every single available server, n times.

Coda: Exactly. Basic HRW forces an ON lookup complexity. For large clusters, that’s prohibitive. You trade architectural simplicity and uniformity for slow lookups. So HRW is maybe better for systems where n is small, but extreme uniformity is critical. What’s the second alternative?

Odyssey: That would be the fixed slot partitioning model, which is the mechanism used by the popular Redis cluster. This sounds dangerously close to the old modulo hash we started with. How does it maintain stability?

Coda: The key space is partitioned into a fixed static number of slots. Redis specifically uses 16,384 of them.

Odyssey: Okay.

Coda: The key assignment is just a fast CRC-16 hash of the key, modulo 16384. And since that divisor, 16384, never changes, key lookup is a guaranteed lightning-fast 01. Ah, so stability comes from fixing the number of partitions themselves. The nodes are just containers for these slots?

Odyssey: Precisely. If you have 10 nodes, each node might hold around 1,638 slots. Scaling just involves migrating these atomic slot units between nodes, not individual keys. And you keep that 01 lookup speed because the client always calculates the slot ID first, no matter how many nodes are holding the slots.

Coda: Right. It trades the hash ring’s infinite flexibility for predictable, guaranteed 01 routing by using a static, predetermined granularity. So we have a clear spectrum of choices, though. A real spectrum of architectural trade-offs. You’ve got naive hashing, 01 lookup, but catastrophic churn risk. The dangerous one. Consistent hashing with V nodes, O log N lookup, minimal churn but complex state management.

Odyssey: That’s the choice for massive distributed stores.

Coda: Right. Then rendezvous hashing, O N lookup, superb uniformity, simple implementation, best for smaller, highly uniformed clusters. And finally, fixed slot partitioning, like Redis, 01 lookup, controlled churn but limited to that fixed granularity. Best for super fast key value stores. That spectrum perfectly illustrates the trade-offs engineers have to make constantly between speed, simplicity, and resilience. This whole deep dive really reveals that fundamental compromise in distributed systems, doesn’t it?

Odyssey: You cannot have guaranteed catastrophe free scaling without sacrificing the mathematical purity and speed of that simple 01 lookup. Consistent hashing is the acknowledgement that operational resilience has to take priority. It makes a strategic trade, accepting a slight manageable complexity increase, that O log N lookup, in exchange for minimizing the risk of that catastrophic disruption. It transforms scaling from a high-stakes gamble into a smooth, manageable process.

Coda: It does. And the most enduring lesson here, I think, is the V node imperative. You just cannot rely on the basic, fragile hash ring geometry for production stability. The risk of hotspots is just too high. V nodes are the necessary statistical correction. They introduce that randomness to achieve load uniformity. They allow for flexible capacity planning through weighting. And they ensure that when a node inevitably fails, the load is scattered across the remaining cluster, protecting the system from cascading failure.

Odyssey: It’s an elegant solution, solving a random geometric imbalance by injecting more but controlled statistical randomness. And that statistical principle gives us a really powerful final thought. The core design lesson of V nodes, using multiple distributed representations to ensure fairness and smoothly distribute load and risk, is applicable far beyond software. Oh, absolutely. Think about it in organizational structures. A system where all decisions or all expertise is centralized in one person or one team.

Coda: That’s a hotspot. It’s inherently fragile. A single point of failure. A robust, scalable organization, much like a VNode-enabled cluster, requires scattering key responsibilities and diversifying knowledge across many smaller independent teams.

Odyssey: That’s how you build resilience. A fascinating insight into how principles of robust engineering often mirror the best practices for organizational resilience. Thank you for joining us for this deep dive into consistent hashing. My pleasure. We’ll see you next time. Thank you.